Superfact 88: The history of artificial intelligence (AI) began in antiquity, with stories of artificial beings. The first artificial neural network model was created in 1943. The Turing test was created in 1950. The field of “Artificial Intelligence Research” was founded as an academic discipline in 1956. The first trainable (able to learn) neural network was demonstrated in 1957.

Since then, artificial intelligence has come a long way. Did you hear about the computer that defeated the reigning world champion in chess? A computer finally defeated the supreme human intellect in the world in an intellectual field. Is this the end of humanity? Oh, wait, that was in 1997.

The various recent launches of large language models such as ChatGPT, Gemini, Claude, Llama, DeepSeek, etc., have impressed many people, but they have also fooled many people into thinking that Artificial Intelligence is a new invention. It is not. Artificial Intelligence has been around for a long time, and its past is filled with many success stories as well as disappointments. Click here to see a timeline for Artificial Intelligence stretching from antiquity to 2025. For additional sources click here, here, here, or here.

I consider this a super fact because it is true and kind of important, and, based on my personal experience, I believe that the long history of Artificial Intelligence comes as a surprise to many.

My Personal Experience with Artificial Intelligence

In 1986, when I was in college in Sweden, I took a class in the LISP programming language. LISP, invented in 1958, was one of the first Artificial Intelligence programming languages. In 1987, as a university-level exchange student, I took a class called Artificial Intelligence at Case Western Reserve University. The book we used was Artificial Intelligence by Elaine Rich, published in 1983. This book and the course focused on decision trees and rule-based algorithms and did not even mention neural networks.

That same year I also took a class called Pattern Recognition, which introduced me to neural networks. In 1986 David Rumelhart, Geoffrey Hinton, and Ronald Williams had published a landmark paper that brought the Rumelhart backpropagation algorithm to a wide audience. Geoffrey Hinton received the Nobel Prize in Physics in 2024. David Rumelhart and Ronald Williams had both died, and since the Nobel Prize is not awarded posthumously, they could not receive it. The 2024 prize was shared with John J. Hopfield, another pioneer in neural networks, who invented the Hopfield network. You can read more about neural networks and the 2024 Nobel Prize in Physics here.

The Rumelhart backpropagation algorithm was a giant leap forward for neural networks and for Artificial Intelligence, and it is the algorithm used to train ChatGPT and the other large language models. Geoffrey Hinton is often interviewed in the media and often presented as the father of Artificial Intelligence. He is not, but he is responsible for arguably the greatest leap forward in neural networks, and in Artificial Intelligence as well.

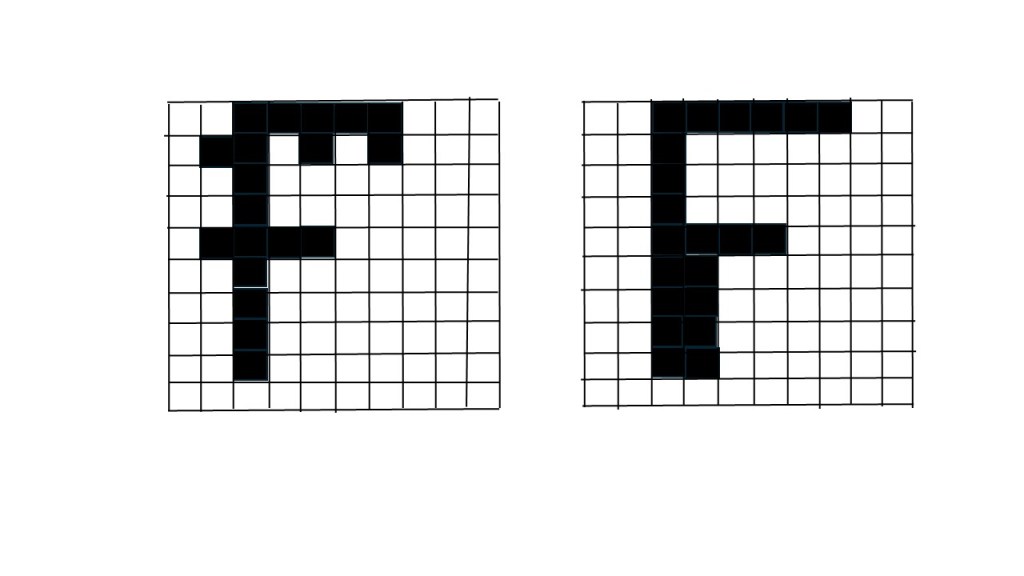

In class we used the Rumelhart backpropagation algorithm to read images with text. It is one thing to type a character on a keyboard and quite another to have a computer identify a character in an image. We trained our primitive neural networks to recognize images of letters using the Rumelhart backpropagation algorithm. We coded the algorithm in the C programming language for a network of perhaps 100 neurons and a few hundred weights (what AI calls the connections that play the role of synapses). It worked pretty well. In comparison, GPT-4, the model behind ChatGPT 4, is estimated to have on the order of a trillion parameters (weights), although OpenAI has not disclosed the exact number. Our class was among the first in the world to try out this then-new algorithm, and at the time I did not realize its importance.

Later I did research and worked in the field of Robotics, where I implemented various Artificial Intelligence algorithms, but not neural networks. I have a PhD in Applied Physics and Electrical Engineering with a specialty in Robotics. At my next workplace, Siemens, I used decision tree algorithms, which are also Artificial Intelligence but not neural networks.

What Is a Neural Network?

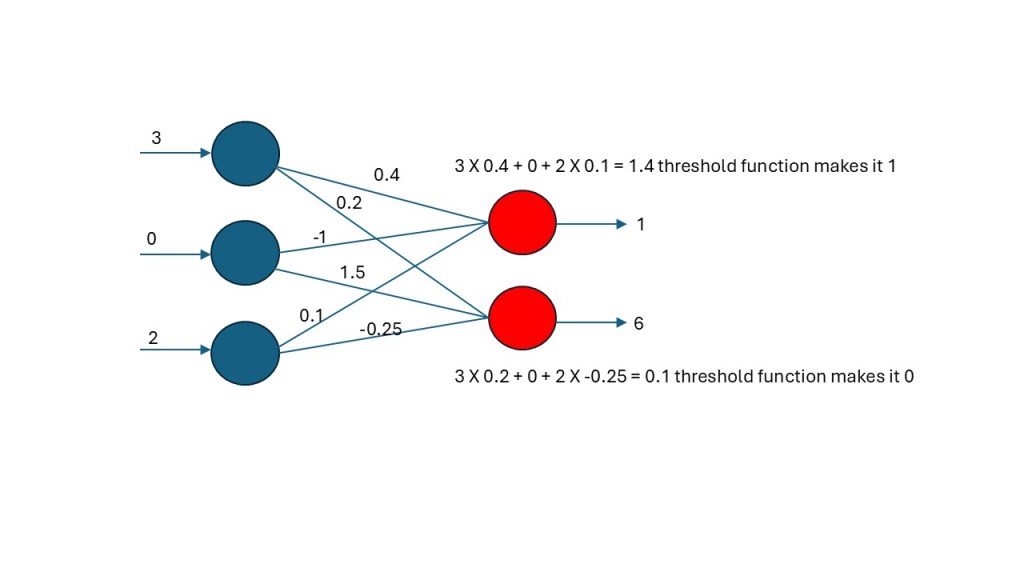

The first neural networks, created by Frank Rosenblatt in 1957, looked like the one above. You had input neurons and output neurons connected via weights that you adjusted using an algorithm. In the case above you have three inputs (2, 0, 3), and each input is multiplied by the weight connecting it to each output: 3 × 0.2 + 0 + 2 × (-0.25) = 0.1 and 3 × 0.4 + 0 + 2 × 0.1 = 1.4. Each output node then applies a threshold function, yielding the outputs 0 and 1.
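The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not Rosenblatt's original implementation. Two things are assumptions because the picture does not give them: the weights from the middle input are set to 0.0 (since that input is 0, any value gives the same result), and the threshold is 0.5.

```python
# Forward pass of a single-layer perceptron using the numbers from the example.
# Assumptions: the middle input's weights are 0.0 (irrelevant, since that input is 0)
# and the threshold is 0.5; neither value is given in the original figure.

def step(z, threshold=0.5):
    """Threshold function: output 1 if the weighted sum reaches the threshold, else 0."""
    return 1 if z >= threshold else 0

def perceptron_forward(inputs, weights, threshold=0.5):
    """weights[k][i] is the weight from input i to output k."""
    sums = [sum(w * x for w, x in zip(row, inputs)) for row in weights]
    return sums, [step(s, threshold) for s in sums]

inputs = [2, 0, 3]
weights = [
    [-0.25, 0.0, 0.2],  # to output 1: 2*(-0.25) + 0 + 3*0.2 = 0.1
    [0.1, 0.0, 0.4],    # to output 2: 2*0.1 + 0 + 3*0.4 = 1.4
]
sums, outputs = perceptron_forward(inputs, weights)
print([round(s, 2) for s in sums], outputs)  # prints [0.1, 1.4] [0, 1]
```

Any threshold between 0.1 and 1.4 would give the same 0 and 1 outputs.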

To train the network you create a set of inputs and the output that you want for each input. You pick some random weights, calculate the total error you get, and use the error to calculate a new set of weights. You do this over and over until you get the output you want for the different inputs. The amazing thing is that the trained neural network will often also give you the desired output for an input that you have not used in the training. Unfortunately, these neural networks weren’t very good, and for some problems they could not be trained at all: a single layer of weights cannot learn patterns that are not linearly separable, the XOR function being the classic example.
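Here is a minimal sketch of that training loop using the classic perceptron learning rule. The learning rate, the epoch limit, starting from zero weights instead of random ones, and the AND/XOR examples are all illustrative assumptions. It shows a case the network learns (AND) and one it cannot learn (XOR).

```python
# Rosenblatt-style perceptron training (the classic perceptron learning rule).
# Learning rate, epoch limit, zero starting weights and the AND/XOR data
# are illustrative choices, not taken from the text.

def predict(w, b, x):
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

def train_perceptron(data, lr=0.1, max_epochs=100):
    """Return (weights, bias, converged). data is a list of (inputs, target) pairs."""
    n = len(data[0][0])
    w, b = [0.0] * n, 0.0  # zero start for reproducibility; random weights also work
    for _ in range(max_epochs):
        errors = 0
        for x, target in data:
            error = target - predict(w, b, x)  # +1, 0 or -1
            if error != 0:
                errors += 1
                # Nudge each weight in the direction that reduces the error.
                w = [wi + lr * error * xi for wi, xi in zip(w, x)]
                b += lr * error
        if errors == 0:  # every training example is now classified correctly
            return w, b, True
    return w, b, False

AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
print(train_perceptron(AND)[2])  # True: AND is linearly separable
print(train_perceptron(XOR)[2])  # False: no single layer of weights can learn XOR
```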

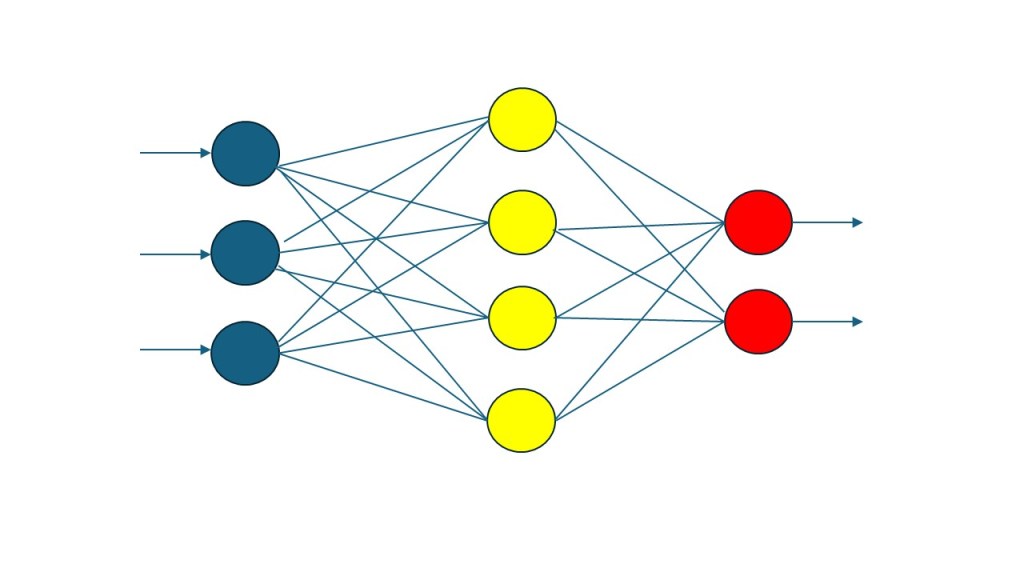

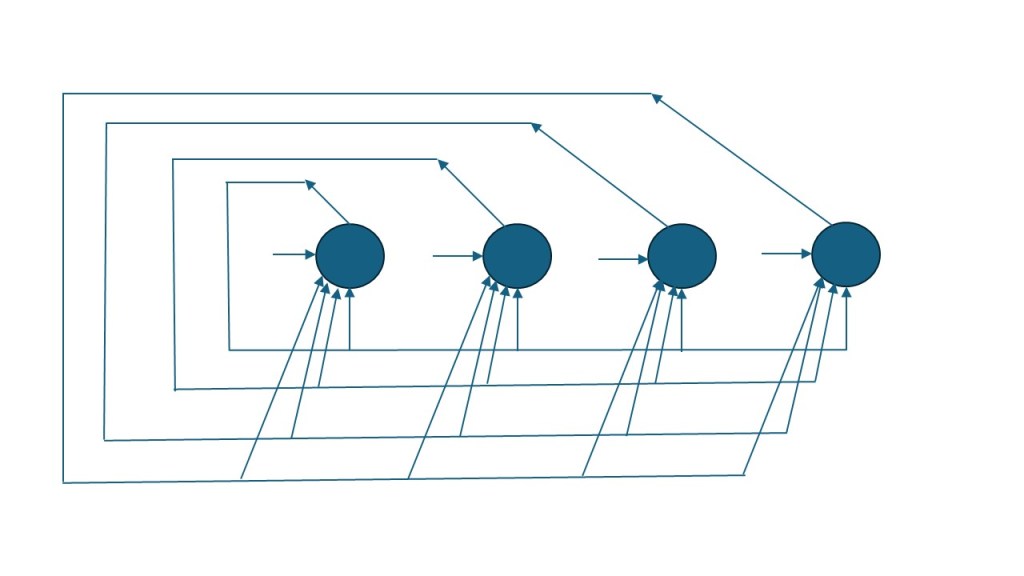

As mentioned, in 1986 Geoffrey Hinton, David Rumelhart and Ronald J. Williams presented the Rumelhart backpropagation algorithm, which was applied to a neural network featuring at least one hidden layer. It was effective, and although it is not guaranteed to find the best possible weights, it could learn patterns that a network without a hidden layer could not. It set off a revolution in neural networks. In the network below you also use the errors in a similar fashion as in the Rosenblatt network. However, the combination of a hidden layer and the backpropagation algorithm makes a huge difference.
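To make the idea concrete, here is a minimal Python sketch of backpropagation for a network with one hidden layer, trained on XOR, which a single-layer perceptron cannot learn. It is not the C code from my class. The choices below are assumptions: sigmoid neurons, a squared error measure, full-batch gradient descent, 4 hidden neurons, a learning rate of 0.5 and 5000 epochs.

```python
import math
import random

# One hidden layer, one sigmoid output, squared error, full-batch gradient descent.
# All sizes and hyperparameters below are illustrative assumptions.

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def init_params(n_in, n_hidden, seed=0):
    """Small random starting weights; W1[j][i] connects input i to hidden neuron j."""
    rng = random.Random(seed)
    return {
        "W1": [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)],
        "b1": [rng.uniform(-1, 1) for _ in range(n_hidden)],
        "W2": [rng.uniform(-1, 1) for _ in range(n_hidden)],  # hidden -> output
        "b2": rng.uniform(-1, 1),
    }

def forward(p, x):
    """Return (hidden activations, output) for one input vector x."""
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(p["W1"], p["b1"])]
    y = sigmoid(sum(w * hj for w, hj in zip(p["W2"], h)) + p["b2"])
    return h, y

def loss(p, data):
    """Total error E = sum over examples of 0.5 * (output - target)^2."""
    return sum(0.5 * (forward(p, x)[1] - t) ** 2 for x, t in data)

def gradients(p, data):
    """Backpropagation: the output error is passed backwards through W2 to the hidden layer."""
    g = {"W1": [[0.0] * len(row) for row in p["W1"]], "b1": [0.0] * len(p["b1"]),
         "W2": [0.0] * len(p["W2"]), "b2": 0.0}
    for x, t in data:
        h, y = forward(p, x)
        d_out = (y - t) * y * (1 - y)  # dE/d(output pre-activation)
        g["b2"] += d_out
        for j, hj in enumerate(h):
            g["W2"][j] += d_out * hj
            d_hid = d_out * p["W2"][j] * hj * (1 - hj)  # error share of hidden neuron j
            g["b1"][j] += d_hid
            for i, xi in enumerate(x):
                g["W1"][j][i] += d_hid * xi
    return g

def train(p, data, lr=0.5, epochs=5000):
    """Repeatedly step every weight against its gradient (updates p in place)."""
    for _ in range(epochs):
        g = gradients(p, data)
        for j in range(len(p["W1"])):
            for i in range(len(p["W1"][j])):
                p["W1"][j][i] -= lr * g["W1"][j][i]
            p["b1"][j] -= lr * g["b1"][j]
            p["W2"][j] -= lr * g["W2"][j]
        p["b2"] -= lr * g["b2"]
    return p

XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
params = init_params(n_in=2, n_hidden=4, seed=1)
print("error before training:", round(loss(params, XOR), 4))
train(params, XOR)
print("error after training: ", round(loss(params, XOR), 4))
for x, t in XOR:
    print(x, "target", t, "output", round(forward(params, x)[1], 2))
```

Depending on the random starting weights, a run like this can occasionally get stuck with a high error, which is why backpropagation is effective in practice without being guaranteed to succeed.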

Below I am showing two 10 X 10 pixel images containing the letter F. The neural network I created in class (see above) had 100 inputs, one for each pixel, a hidden layer, and output neurons corresponding to each letter I wanted to read. I think I used about 10 or 20 versions of each letter during training; that is, I ran the algorithm to adjust the weights until the error was almost gone. Then, if I gave it an image of a letter that I had never used before, the neural network typically got it right even though the image was new.

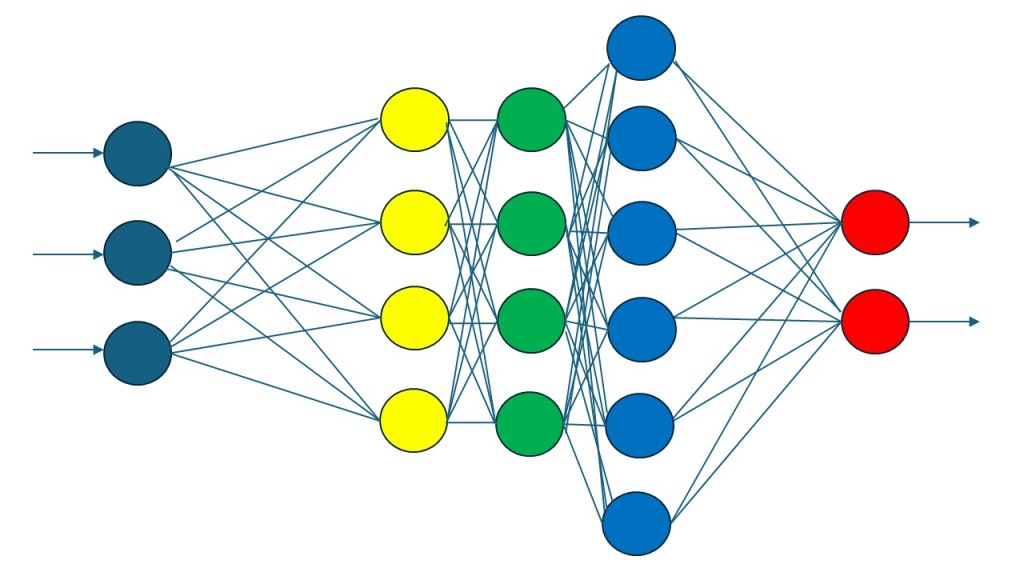
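The input and output side of such a letter reader can be sketched as follows. The bitmap is a hypothetical letter F drawn for illustration, and one output neuron per capital letter A to Z is an assumption. The sketch flattens the 10 x 10 image into 100 inputs, builds the target the network is trained toward, and reads the answer from the most active output neuron.

```python
# Turning a 10 x 10 pixel image into 100 network inputs, building a one-hot target,
# and decoding the network's answer. The bitmap and the A-Z output layer are
# hypothetical examples, not the images from the class.

LETTERS = "ABCDEFGHIJKLMNOPQRSTUVWXYZ"  # assumption: one output neuron per capital letter

F_BITMAP = [
    "..........",
    ".#######..",
    ".#........",
    ".#........",
    ".#####....",
    ".#........",
    ".#........",
    ".#........",
    ".#........",
    "..........",
]

def image_to_inputs(bitmap):
    """Flatten a 10 x 10 bitmap row by row into 100 inputs: 1.0 for ink, 0.0 for background."""
    assert len(bitmap) == 10 and all(len(row) == 10 for row in bitmap)
    return [1.0 if pixel == "#" else 0.0 for row in bitmap for pixel in row]

def target_for(letter):
    """One-hot target: the output neuron for `letter` should be 1, all others 0."""
    return [1.0 if c == letter else 0.0 for c in LETTERS]

def decode(outputs):
    """Read the network's answer as the letter whose output neuron is most active."""
    best = max(range(len(outputs)), key=lambda k: outputs[k])
    return LETTERS[best]

x = image_to_inputs(F_BITMAP)
t = target_for("F")
print(len(x), sum(x), decode(t))  # prints 100 18.0 F
```

Training then means adjusting the weights until the network's outputs for each training image come close to its one-hot target.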

At first, it was believed that adding more than one hidden layer did not add much. That changed when it was discovered that training the layers differently (for example, pretraining one layer at a time before fine-tuning the whole network with backpropagation) created better, smarter neural networks, and so at the beginning of this century deep learning neural networks (or simply deep learning AI) were born. I can add that our Nobel Prize winner Geoffrey Hinton was also a pioneer in deep learning neural networks.

I should mention that there are many styles of neural networks, not just the ones I’ve shown here. Below is a network called a Hopfield network, named after John Hopfield (it was certainly not the only thing he discovered).
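A Hopfield network works quite differently from the networks above: it stores patterns in its weights and recalls a stored pattern when given a damaged version of it. Here is a minimal sketch, assuming the standard Hebbian storage rule and neurons that take the values +1 and -1. The 8-neuron pattern is a made-up example.

```python
# Minimal Hopfield network: store a +1/-1 pattern with the Hebbian rule,
# then recall it from a corrupted copy. The 8-neuron pattern is a made-up example.

def hebbian_weights(patterns):
    """W[i][j] = sum over stored patterns of p[i] * p[j], with no self-connections."""
    n = len(patterns[0])
    W = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    W[i][j] += p[i] * p[j]
    return W

def recall(W, state, max_sweeps=10):
    """Asynchronous updates: each neuron takes the sign of its weighted input until nothing changes."""
    s = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            h = sum(W[i][j] * s[j] for j in range(len(s)))
            new = 1 if h >= 0 else -1
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:  # a stable state (an "energy minimum") has been reached
            break
    return s

stored = [1, -1, 1, 1, -1, -1, 1, -1]
W = hebbian_weights([stored])
noisy = list(stored)
noisy[2] = -noisy[2]  # flip one "pixel"
print(recall(W, noisy) == stored)  # prints True
```

Storing several patterns works the same way by summing their contributions to W, although recall becomes less reliable as more patterns are added.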

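For your information, ChatGPT-3.5 and ChatGPT-4 are deep learning neural networks like the one in my colorful picture above, although they use a more elaborate architecture called a transformer. Instead of 3 hidden layers, GPT-3, the predecessor of the model behind ChatGPT-3.5, has 96 layers, and where my network has 19 neurons, GPT-3 has about 175 billion parameters (weights, not neurons). OpenAI has not published the corresponding figures for GPT-4.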
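The difference between counting neurons and counting parameters is easy to see with a little arithmetic. Here is a sketch for a fully connected network. The layer sizes [100, 10, 26] are a hypothetical letter reader, not my actual class network, and input nodes are counted as neurons, as in the pictures above. Parameters are one weight per connection between adjacent layers plus one bias per non-input neuron.

```python
# Counting neurons versus parameters in a fully connected network.
# The layer sizes used below are hypothetical examples.

def count_network(layer_sizes):
    """Return (neurons, parameters) for a fully connected network.

    layer_sizes lists the neurons per layer, inputs first, e.g. [100, 10, 26].
    Parameters = one weight per connection between adjacent layers
                 + one bias per non-input neuron.
    """
    neurons = sum(layer_sizes)
    weights = sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))
    biases = sum(layer_sizes[1:])
    return neurons, weights + biases

print(count_network([100, 10, 26]))  # prints (136, 1296)
```

Because the number of weights grows with the product of neighboring layer sizes, parameter counts quickly dwarf neuron counts, which is why large language models are described by their parameters.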

The Dark Side of AI

The potential harm of AI is a related and important topic that I did not address. However, this is already a very long and complex post, and I don’t know enough about this topic (yet). To read more about this topic, check the comments made by “Grant at Tame Your Book” (in the comment section). Better yet, Grant wrote an excellent, well-researched, and professional post about this issue called Don’t Confuse AI with a Benign Tool. Please check it out.

It’s refreshing to read a post that puts today’s excitement into perspective. The author’s personal experience adds credibility and reminds us that the current wave builds on a long history of research.

Thank you so much, Myrela. You are right. We are very excited about Artificial Intelligence right now, and for good reasons, but it has a long history. It didn’t just suddenly happen. Most of the recent successes come more from greater computer power than from any recent inventions.

Thanks for your very excellent and comprehensive overview of AI, Thomas. I knew that it certainly isn’t new but my knowledge of its background is at best very basic. Your piece adds a lot of meat to the bone.

Thank you so much for your very kind words, Lynette. There has been a lot of talk about it lately, for good reasons. Faster and more powerful supercomputers have helped launch a commercial revolution, but it is easy to forget that the concepts, the visions and goals, the algorithms, and the inventions have for the most part been around a very long time.

Thank you, Thomas, for your factual detail of what’s used to create AI models.

However, please give equal information about the harm caused by the unregulated AI industry. Offer examples of how the many AI models created by greedy Big Tech have stolen copyrighted material, killed creativity, and destroyed reputations. Sometimes, leading people to commit suicide.

For example, please show how people can suffer from AI-related psychosis. Give equal time to how predators and pornographers are using AI to create sexualized images of underage school children based on photos snatched from social media. Devote information to how these tools are used to stalk people, especially women.

Also, please list the many instances where AI replaced actual learning, dumbing down society instead of creating the advertised utopia of universal knowledge. Share how large language models (LLMs) contain vast amounts of erroneous information and bias that are not eliminated during the training and testing periods. Explain how AI hallucinations can corrupt answers to prompts. Help people grasp how AI used for research often contains material errors. Explain why they must use the time they saved using the tool to verify the outputs using old-school techniques.

I could go on about what’s wrong with AI in its current state. Instead, I ask you to employ your excellent experience and educational resources to wake people up to the actual dangers of AI.

I’m not taking away from AI’s potential or your excellent post of its history. Please show how users need a combination of wisdom and discernment to avoid the pitfalls of this addictive tool.

People deserve to know how AI can harm adults and children.

Thank you so much, Grant. You are bringing up a very important topic. However, the potential harm of AI is an entire topic in itself, and this post is already long and complicated. Perhaps I’ll do a post on this topic sometime in the future. I’ve read some books on the potential harm of AI that I am planning to review/post about. So what I did was add a note at the end of my post:

Note on potential harm of AI

The potential harm of AI is a related and important topic that I did not address. However, this is already a very long and complex post, and I don’t know enough about this topic (yet). To read more about this topic check the comments made by “Grant at Tame Your Book” (in comment section).

Feel free to add as much as you want about this topic in the comment section. I think you probably know more about the potential harm of AI than I do.

Thanks, Thomas, for understanding the intent of my comments.

I’ve been studying the dangers to children and adults for years. It’s exponentially more serious than most perceive. For many reasons, too few people have grasped the dangers yet.

I’m building a page on my site with a threefold goal:

1. Explain in lay terms what artificial intelligence is.

2. Give facts about how AI harms children and adults.

3. Make writers aware of the consequences of AI use.

I’ll gladly share with you whatever I’ve learned to date and look forward to your review of books on the topic.

That is great Grant and I am looking forward to reading it. Whenever your page is finished I would like to reblog it if you don’t mind (or similar).

Fantastic!

How fascinating, Thomas! I’ll show your post to my son. He talks to me about AI and algorithms. I think he’d be interested in neural networks. Interesting that artificial intelligence has been around for a long time.

Thomas has given us an excellent example of how AI works. However, please use your preferred search engine (e.g., DuckDuckGo.com) to find out about the adverse effects on children and the many dangers. For example, enter ‘AI caused harm to children’ and carefully read the many pages. Scary!

Thank you, Grant. Very good points. Even Geoffrey Hinton speaks extensively about the dangers of AI.

Thank you so much, Ada. Yes, I’ve used many types of AI algorithms: rule-based algorithms, search algorithms such as A*, decision trees of various kinds (C4.5, C5.0, etc.), algorithms based on evolution, as well as neural networks, but it seems deep learning neural networks (the ones with multiple hidden layers) turned out to be the most successful.

This was one of the most informative things related to AI that I have read, and the way you have laid out the facts… Amazing!!

Thank you so much for your kind words Aparna

Thank you, Thomas, for your well-presented and learned insight into the saying “there is nothing new under the sun.” I had no idea that artificial intelligence is not new. And as an aside, I was thinking about AI and how it works (since I don’t know a thing about it) just the other day, in fact. Thank you for the great explanations.

Thank you so much for your kind words, Suzette. Yes, everyone is talking about it now, making a lot of people think it is something that just happened, but it has a long history. The biggest recent change is probably that computers have finally gotten powerful enough that LLMs have become truly commercially viable.

You are most welcome, Thomas. Cheers.

Cheers Suzette 🥂

I was aware of some of the early developments that led to present-day AI, but those weren’t in the public view in the same way.

I’ve heard interviews with Geoffrey Hinton where he predicted terrible things if AI is developed without human qualities. He said it needs to have a maternal instinct to prevent it from destroying us.

Without government regulation, Big Tech executives have shown little interest in protecting children and adults. The AI industry prioritizes profit over safety. For example, Sam Altman loosened ChatGPT’s restrictions on erotica in October 2025, reversing an earlier statement in August not to allow sexual images.

Thank you Grant. You are certainly bringing up good points. That is terrible.

Tech bros without any maternal instincts. Or even paternal?

Yes, you are right, Audrey. I think the continued increase in the speed and power of computers is really what has brought even fairly old AI algorithms to the point where they are truly commercially viable. You are certainly right about Geoffrey Hinton. He is very concerned about the potential dangers of AI. Grant, below, is bringing up some interesting points.

It’s fascinating to read the history and development of AI, Thomas.

Thank you so much for your kind words Denise.

I think it’s an understatement to say that AI is a double-edged sword.

Yes you are right Anneli. Definitely a double-edged sword

This was a fascinating history of how AI has developed and like many people, I didn’t realize it goes that far back. Grant has raised the issue of misuse and that is a sobering thought, especially when you see all the AI fakes presented as news, music videos, etc. It’s getting more difficult to differentiate between those and real material!

Yes, you are right, Debbie. It is a long, fascinating story, but Grant raised an interesting topic about misuse and potential harm. I am also worried, and I am tired of seeing all the deep fakes. Some are obvious and admit to being deep fakes for comic relief. However, there are many deep fakes that intentionally try to fool people, and the better they get, the more they destroy trust, our most valuable currency.

Exactly! And Facebook seems to be one of the worst offenders now. I keep reporting fake stuff, but nothing ever gets done about it! 🙄

Yes, I think the Meryl Streep deep fake was around for a couple of months, I’ve seen the Steve Martin deep fake every time I log in for several months now, and the Neil deGrasse Tyson deep fake claiming Earth was flat was going around for a while. They are not going after those, but if I post a beer review, I am sometimes blocked instantly for selling beer without a license (which I’ve never done).

An excellent post, Thomas. I still find all those things about AI mind-boggling!

Yes, one thing that is different between a decision tree and a neural network is that you can follow a decision tree backwards and trace how it arrived at its decision and what caused it to look the way it does. You can correct something in the tree after training because you can see what is wrong (I have done that). Neural networks, on the other hand, are a lot more mysterious. You cannot know exactly why the network got the weights it did during training. It is just a mess of billions of numbers changing during training (if it is a large network). So it is like a black box whose inner workings even its creator/engineer doesn’t really understand. However, modern neural networks generally perform better than decision trees.
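The traceability point can be illustrated with a small Python sketch. The tree, its features ("temperature", "vibration") and thresholds are hypothetical examples, not from any real system. Each internal node tests one feature, and the list of tests made on the way from the root to a leaf is the explanation for the decision.

```python
# Why a decision tree is traceable: the path from root to leaf is the explanation.
# Tree, features and thresholds are hypothetical examples, not from any real system.

TREE = {
    "feature": "temperature", "threshold": 80.0,
    "left": {"leaf": "normal"},  # temperature <= 80
    "right": {
        "feature": "vibration", "threshold": 0.5,
        "left": {"leaf": "check cooling"},
        "right": {"leaf": "stop machine"},
    },
}

def classify(tree, sample):
    """Return (decision, path) where path records every test made on the way down."""
    path = []
    node = tree
    while "leaf" not in node:
        f, thr = node["feature"], node["threshold"]
        if sample[f] <= thr:
            path.append(f"{f} = {sample[f]} <= {thr}")
            node = node["left"]
        else:
            path.append(f"{f} = {sample[f]} > {thr}")
            node = node["right"]
    return node["leaf"], path

decision, path = classify(TREE, {"temperature": 85.0, "vibration": 0.7})
print(decision)  # prints stop machine
for test in path:
    print("  because", test)
```

Correcting the tree after training can be as simple as editing a threshold or a leaf by hand. A trained neural network offers no comparable single number to inspect.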

Lulu: “Our Dada says he used to play with something called Eliza that would pretend to be a therapist when you talked to it. All it did was echo what you said back at you, pretty much, but it was still surprisingly like talking to a person.”

Java Bean: “Of course, our Dada was never much for talking to people a lot, so …”

Oh yes, I remember Eliza, the old chatbot from the 1960s. I tried to create something similar in the 1980s, but it was not as good as Eliza. My teacher told me it was stupid. Anyway, that is an interesting memory, Java Bean.

I always used to like how Eliza would say, “We were discussing you, not me” if you tried to get it to reference itself. The modern LLMs are also all about discussing you, not them, but they are more sophisticated about it …

Ha ha, you are right. Eliza was very primitive Artificial Intelligence, using pattern matching without neural networks. It was long before Rumelhart’s backpropagation algorithm.
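The pattern matching behind an Eliza-style chatbot can be sketched in a few lines. The rules below are illustrative assumptions loosely modeled on the behavior described in these comments, not Joseph Weizenbaum's original DOCTOR script. The sketch tries keyword patterns in order, swaps pronouns in the captured text ("my" becomes "your"), and fills in a response template. There are no neural networks or learning involved.

```python
import re

# A tiny Eliza-style responder: keyword patterns, response templates and
# pronoun "reflection". The rules are illustrative assumptions, not the original script.

REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

RULES = [
    (r"\byou\b", "We were discussing you, not me."),
    (r"\bi feel (.*)", "Why do you feel {0}?"),
    (r"\bmy (\w+)", "Tell me more about your {0}."),
]

def reflect(fragment):
    """Swap first- and second-person words so the reply points back at the user."""
    return " ".join(REFLECTIONS.get(w, w) for w in fragment.split())

def respond(sentence):
    text = sentence.lower().strip(" .!?")
    for pattern, template in RULES:  # first matching rule wins
        m = re.search(pattern, text)
        if m:
            return template.format(*(reflect(g) for g in m.groups()))
    return "Please go on."  # fallback when no keyword matches

print(respond("I feel sad about my job"))  # prints Why do you feel sad about your job?
print(respond("Are you a computer?"))      # prints We were discussing you, not me.
print(respond("My mother hates me."))      # prints Tell me more about your mother.
```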

Thanks for the interesting post. Machine learning and neural networks have certainly made lots of progress in recent decades. It’s probably worth a post in its own right, but it would also be interesting to hear about the current thoughts on whether computers could achieve self-awareness and sentience — and of course, the related problem of determining if they’ve actually achieved it, or have they simply learned enough from observing humans that they can mimic it very well.

Thank you, David, you are right: both the recent advances and the thoughts on whether computers could achieve self-awareness and sentience are quite shocking things. I’ve read some books on AI and self-awareness, so maybe I’ll review one of those.

I would definitely be interested in hearing your thoughts about one of the books about AI and self-awareness.

Thank you so much David

Hi Thomas, this is interesting. I have known about AI all my life, which makes sense given the timelines in this post. However, the AI I grew up with isn’t what we have. It was the bots in Star Wars and later Terminator. I think AI is filling the internet with what I call white noise, which is a lot of half-truths and unverified information. I think AI in its current form is a negative thing for society.

Thank you, Robbie. You are right, AI has evolved quite a bit. AI was used in a lot of interesting applications in the past: game playing, chess robots, robot motion, planning, writing code automatically, optical character recognition (reading text in an image), image processing, and computer vision. But the new stuff we see with the large language models that everyone is interacting with is a new phase. It is largely the same technology, though (neural networks with hidden layers using the Rumelhart backpropagation algorithm), but with much more computer power.

Yes this new phase of AI comes with a lot of negative aspects like you mention. I don’t know where this will lead. I should say that I did not discuss this because it is an entire topic on its own and my post was long enough. However, Grant at Tame Your Book in the comments above is working on a post on this topic and he allowed me to reblog once he is done.

I look forward to seeing what Grant’s post says. Thanks, Thomas.

Yes me too. Thank you Robbie.

wow! you always give my mind such a workout!! thanks, Linda 🙂

Thank you so much for your very kind words Linda

Always a pleasure! 🙂

I knew that AI had been around for a long time however my knowledge was very limited and you have fleshed that out amazingly well so thank you, Thomas…In my head I have also had many reservations stemming from how the internet although an amazing source of knowledge in the wrong hands can be terrifying and therefore I have no illusions that AI is already going the same way.

I was interested in Grant’s comments about the dangers, some of which mirror my thoughts… A good post, Thomas, and we would all do well to apply caution, as would governments, as it is certainly gaining momentum at some pace now…

Thank you so much, Carol, for your kind words. I am also looking forward to Grant’s post on this issue. I think it is wise to be cautious about AI. There are so many potential risks, as well as problems that are already popping up. Like any new technology, how we use it is what matters for the outcome. We can use technology in ways that are harmful. But as Yuval Noah Harari says, not only can we misuse AI, but AI can use and misuse itself (other AI), which is a new phenomenon. It is an autonomous agent. Well, I am not quoting exactly, but he said something like that.

But AI can misuse itself is a scary comment it sort of conveys that AI can think for itself…now I am scared ….

Yes, I agree, that is a scary part that Yuval Noah Harari often speaks about, as does the book Superintelligence by Nick Bostrom. I may review that book at some point. I can think of a couple of movies (well, series of movies) on that topic: Terminator (Arnold Schwarzenegger) and the Matrix. In Terminator, AI / Skynet becomes self-aware / conscious and launches a surprise attack on the human race. It probably won’t happen, but it is a realistic scenario. This is something we need to start discussing.

I agree about the movies, and that discussion is required, also at a higher level, and safeguards need to be put in place…

About safeguards, Geoffrey Hinton, the co-inventor of the Rumelhart backpropagation algorithm and the 2024 Nobel Prize in Physics winner (I mentioned him in my post), suggested the same thing. The safeguard he suggested was to embed a maternal instinct in AI. However, if we want to use AI against our enemies in a war, a maternal instinct may get in the way, so in that case we may not want to do that. But you are right, we need safeguards.
