Superfact 90: Large Language Models (LLMs) such as ChatGPT, Claude, Llama, and Gemini are just one popular application of Artificial Intelligence among hundreds, and LLMs represent just one branch of Artificial Intelligence.

LLMs are currently the most popular “viral” AI. We can all access LLMs in our browsers. This has created the common misconception that Artificial Intelligence is the same as Large Language Models. However, LLMs represent only one branch of narrow AI systems designed to perform specific tasks.

Applications of Artificial Intelligence other than what Large Language Models are used for include robotics, robot motion planning, advanced control systems using AI, self-driving cars, image processing, optical character recognition, classification, facial recognition systems, medical imaging diagnostics, game playing such as chess playing computers, financial fraud detection, cybersecurity, investment robots, route optimization, mathematical proof generation, recommendation algorithms, virtual assistants, programming code generation, smart home devices, drug discovery, and that is just for starters.

There are probably many applications and types of Artificial Intelligence that we have not yet invented.

LLMs use large neural networks with many hidden layers, so-called deep learning, and they are trained with the Rumelhart backpropagation learning algorithm, popularized in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams. Clearly, neural networks with multiple hidden layers trained with the Rumelhart backpropagation algorithm are incredibly successful, but they are just one of many kinds of Artificial Intelligence algorithms, and who knows what we will see in the future. Related to this post is my previous post Artificial Intelligence is Not New. We have only just begun.

I consider this a super fact because it is true, kind of important, and I believe that the multitude of Artificial Intelligence algorithms and applications is a surprise to many.

The many Artificial Intelligence Algorithms

Thanks to their great improvement and success, neural networks have become very popular, and Large Language Models use very large neural networks with multiple hidden layers (trained with the Rumelhart backpropagation algorithm). You can read more about that here.

However, there are many other AI algorithms, hundreds, maybe thousands. One example is genetic algorithms. These are algorithms that mimic evolution. They iteratively select a set of the best candidate solutions, combine them (crossover), and add random changes (mutation) to generate new solutions. Then they select the best solutions again and repeat the process. Selecting the best solutions corresponds to natural selection. I tried out such algorithms at my work, and over many iterations / generations you can get some impressive results. It is easy to understand how a complex organ such as an eye can evolve in a similar way in nature.
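To make the select / crossover / mutate loop concrete, here is a minimal sketch in Python. It is not the code I used at work. The toy problem (evolving a list of bits toward all ones), the function names, and every parameter value are assumptions chosen only for illustration.

```python
import random

def fitness(bits):
    """Toy objective (an assumption for illustration): count the 1s."""
    return sum(bits)

def crossover(a, b, rng):
    """Single-point crossover: prefix from one parent, suffix from the other."""
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]

def mutate(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - b if rng.random() < rate else b for b in bits]

def evolve(length=20, pop_size=30, keep=10, generations=40, rate=0.02, seed=0):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        # Selection: keep only the fittest candidates ("natural selection").
        population.sort(key=fitness, reverse=True)
        survivors = population[:keep]
        best_per_generation.append(fitness(survivors[0]))
        # Reproduction: refill the population with mutated offspring.
        children = []
        while len(survivors) + len(children) < pop_size:
            mother, father = rng.sample(survivors, 2)
            children.append(mutate(crossover(mother, father, rng), rate, rng))
        population = survivors + children
    population.sort(key=fitness, reverse=True)
    best_per_generation.append(fitness(population[0]))
    return population[0], best_per_generation

best, history = evolve()
print("best fitness per generation:", history)
```

Because the survivors are carried over unchanged, the best fitness can never get worse from one generation to the next. Real applications replace the toy `fitness` function with a measure of how good a candidate solution actually is.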

One family of decision-tree-based machine learning algorithms that I used specifically for classification tasks at work was C4.5 and C5. More specifically, I used them for evaluating the results from automatic mail sorting systems. Basically, the question was how well a result from a certain machine can be trusted. I don’t remember exactly, but my classes were something along the lines of super reliable, pretty reliable, average, and this result probably sucks. Other examples of this type of machine learning are ID3, Random Forest, Gradient Boosting, and CART. These types of algorithms are still very popular.

One advantage of using decision-tree-based machine learning over neural networks for the same task is that when a decision has been made, you can follow the decision tree backwards and see why a decision / classification was made. In fact, if you have fewer than 100 parameters you could likely do it over lunch. When a neural network makes a decision, all you have is a large bunch of numbers that were spit out by an algorithm that looped possibly thousands of times, changing all the numbers every time. You can’t backtrack and figure out exactly how a decision was made. You just have to trust the neural network. The advantage of a neural network in this situation is that, if it is trained properly, it is likely to give a better result.
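Here is a small sketch of what "following the decision tree" can look like in code. The tree below is written by hand rather than learned by C4.5, and the feature names, thresholds, and class labels are hypothetical stand-ins, not the ones from my mail sorting work.

```python
# A tiny hand-written tree; feature names, thresholds, and labels are hypothetical.
TREE = {
    "feature": "read_confidence", "threshold": 0.8,
    "below": {
        "feature": "address_matches", "threshold": 0.5,
        "below": {"label": "probably wrong"},
        "above": {"label": "average"},
    },
    "above": {
        "feature": "address_matches", "threshold": 0.5,
        "below": {"label": "pretty reliable"},
        "above": {"label": "super reliable"},
    },
}

def classify(node, sample):
    """Return (label, path), where path lists every test applied on the way down."""
    path = []
    while "label" not in node:
        value = sample[node["feature"]]
        went_above = value >= node["threshold"]
        op = ">=" if went_above else "<"
        path.append(f'{node["feature"]} = {value} {op} {node["threshold"]}')
        node = node["above"] if went_above else node["below"]
    return node["label"], path

label, path = classify(TREE, {"read_confidence": 0.93, "address_matches": 1})
print(label)
for step in path:
    print("  because", step)
```

For this sample the sketch prints "super reliable" followed by the two tests that led there, which is exactly the kind of explanation a trained neural network does not hand you.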

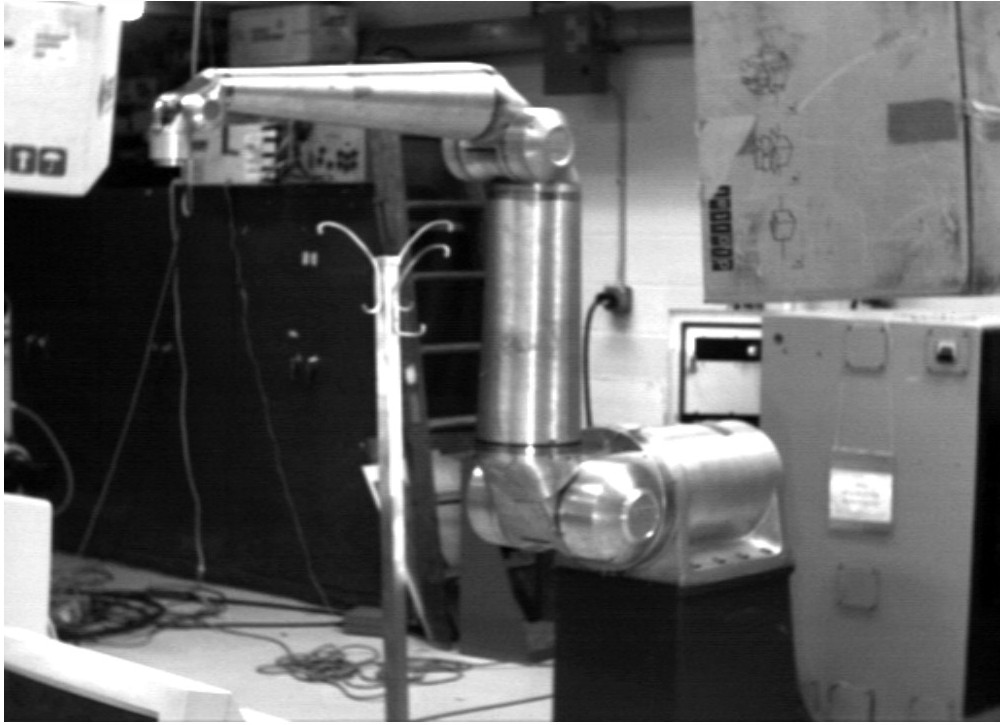

Search algorithms are another type of algorithm used in Artificial Intelligence. For robot motion planning I used an algorithm called A* (A-star), which is a very efficient pathfinding algorithm. It comes in dozens of variants, and there are hundreds of other types of search algorithms.
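For the curious, this is roughly what a basic A* looks like. It is a textbook-style sketch on a small grid, not my robot motion planner. The grid layout, the four-direction movement, the unit step cost, and the Manhattan distance heuristic are all assumptions made to keep the example short.

```python
import heapq

def a_star(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) and 1 (blocked) cells.

    Returns the path as a list of (row, col) cells, or None if no path exists.
    """
    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected unit-cost grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    rows, cols = len(grid), len(grid[0])
    frontier = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    came_from = {start: None}
    cost_so_far = {start: 0}
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            # Walk the parent links back to the start to rebuild the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if cost > cost_so_far[cell]:
            continue  # stale queue entry; a cheaper route was found later
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if not (0 <= nr < rows and 0 <= nc < cols) or grid[nr][nc] == 1:
                continue
            new_cost = cost + 1
            if new_cost < cost_so_far.get(nxt, float("inf")):
                cost_so_far[nxt] = new_cost
                came_from[nxt] = cell
                heapq.heappush(frontier, (new_cost + heuristic(nxt), new_cost, nxt))
    return None

GRID = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
print(a_star(GRID, (0, 0), (3, 3)))
```

The heuristic is what separates A* from a plain breadth-first search: cells that look closer to the goal are expanded first, so far fewer cells need to be visited on large maps.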

These are just a few examples, but there are also knowledge-based agents, AI agents with reinforcement learning algorithms, algorithms based on Bayes’ Theorem, Support Vector Machines, Markov Decision Processes, clustering algorithms, the K-nearest neighbor (KNN) algorithm, simulated annealing, hill climbing, the ant colony optimization algorithm, and of course neural networks, of which there are also many types. I used a relatively unknown form of artificial intelligence called reflex control for my robotics research. The point is, there is a zoo of artificial intelligence algorithms out there. Deep learning neural networks are very popular AI algorithms but far from the only ones.

My Personal Experience with Artificial Intelligence

In 1986, when I was in college in Sweden, I took a class in the LISP programming language. LISP was the first Artificial Intelligence programming language, and it was invented in 1958. In 1987, as a university level exchange student, I took a class called Artificial Intelligence at Case Western Reserve University. That same year I also took a class called Pattern Recognition which introduced neural networks to me.

In 1986 a landmark paper was published by David Rumelhart, Geoffrey Hinton, and Ronald Williams introducing the Rumelhart backpropagation algorithm. Geoffrey Hinton received the Nobel Prize in Physics in 2024. David Rumelhart and Ronald Williams had both died by then and could therefore not receive the Nobel Prize, which is not awarded posthumously. The Nobel Prize was also given to John J. Hopfield, another pioneer in neural networks. He invented the Hopfield network. You can read more about neural networks and the Nobel Prize in Physics in 2024 here.

The Rumelhart backpropagation algorithm was a giant leap forward for neural networks and for Artificial Intelligence, and it is the algorithm used by ChatGPT and the other large language models. Geoffrey Hinton is often interviewed in the media and often presented as the father of Artificial Intelligence. He is not, but he is arguably partially responsible for the greatest leap forward in neural networks, as well as in Artificial Intelligence.

In the pattern recognition class, we used the Rumelhart backpropagation algorithm on a simple neural network to read images with text. Later I did research in the field of Robotics, where I implemented various Artificial Intelligence algorithms as mentioned above. I have a PhD in Applied Physics and Electrical Engineering with a specialty in Robotics. Later I would use artificial intelligence algorithms in my professional career.

My previous posts on Artificial Intelligence, “Artificial Intelligence is Not New”, and “The Nobel Prize in Physics and Neural Networks”, describe how neural networks work in greater detail.

Note on potential harm of AI

The potential harm of AI is a related and important topic that I did not address. I don’t know much about this topic. However, Grant from “Grant at Tame Your Book” has written an excellent, well-researched, and professional post about this issue called Don’t Confuse AI with a Benign Tool. Please check it out.

Great, informative post! As a Star Wars fan, I’ll say seeing C-3PO and R2-D2 here was a cherry on top.

Thank you so much Alex. C-3PO and R2-D2 are certainly good examples of AI and I am glad you thought it was a cherry on top.

A very interesting and engaging post, Thomas. Thank you.

Thank you so much for your kind words Lynette.

Wow, my son will love this! I had no idea LLMs use large neural networks with many hidden layers. What an informative post!

Thank you so much for your kind words Ada. It would be very exciting if your son loved this post. Yes, neural networks with multiple hidden layers have been really successful lately. That would have been hard to guess a few decades ago, when only one hidden layer was the rule. People thought adding more hidden layers would not do any good.

I am impressed by your vast knowledge and experience, Thomas. Thank you for this eye-opener, I thought that the term “AI” meant only one form of programming and/or technology. Excellent share.

Thank you so much for your kind words Suzette. Yes, my guess was that the many other AI algorithms are unknown while everyone is talking about LLMs like ChatGPT.

Hi Thomas, this is interesting and relatable. I see this AI trying to push into my life every time I use my phone or laptops.

Yes you are right. AI is spreading and popping up in new places.

As Suzette said, your expertise on the subject of AI is impressive. I had no idea of the depth and scope, and appreciate the education. Looking forward to learning more!

Thank you so much for your very kind words Debbie. It is an interesting field, but we’ll see if it does us mostly good or harm.

Maybe this is related and maybe not, but can you tell me, Thomas, who decides what the prompts are for texting? If I type one word on my phone, there are three words that pop up at the top of the keyboard area to suggest what I might want to type next and often it helps to speed up the writing of the message. But I have noticed that in some cases, the prompts are politically motivated, and that really makes me angry. Any thoughts on this?

Thank you Anneli. The autocomplete or predictive text that you are talking about is indeed AI or machine learning software that is on your phone, which reminds me that this is yet another application of AI (and it does not require a datacenter, just your phone’s OS). It uses your personal usage history, basically everything you type on the phone keyboard, your personal contacts and context, to come up with good guesses as to what you will type next. I did not know that it was politically motivated, but you can turn it off. I turned off this feature because I am writing in three languages (English, Swedish, French) and it was just really bad in my case.

I will have to try to turn it off. It is so disgusting and it certainly does not reflect my views, or if it is using my personal usage or history, it is doing so in a misguided way. For example, when I write the word “kill,” the next word suggests whom I should kill. No matter how strongly I feel about any politician, I would never suggest killing anyone. There are some sick people out there and one of them is hiding in my phone (Mr. No Intelligence). But thank you very much for your help. I always wondered what gremlin was doing this. I understand what you mean about the prompts being a nuisance when you write in a different language. I can’t even make up a creative word of my own without the gremlin changing it to what it thinks it should be. Very frustrating. So far, AI does not impress me.

Yes, it sounds like you should turn it off. Even if AI can make good guesses, it can also make some really silly guesses that no human would ever make. It lacks true intelligence; it is still largely a calculator. That might change one day, but for now it is very risky to blindly trust AI. The real world may present unusual situations that the AI has not been sufficiently trained on, and when that happens squirrels do a better job. This is something that, for example, the people at Meta do not seem to understand. Their AI bots are failing miserably, leading to bizarre decisions, and they seem to be clueless about it. So again, it is probably best to turn it off.

By any chance did you catch Dario Amodei and Anthropic staff on 60 Minutes? If not, it is well worth viewing. It’s available on YouTube as well. I learned a lot!

No I did not. That sounds very interesting. I will watch it when I get a chance. Thank you so much for the suggestion Violet.

Thanks for the informative and insightful post about large language models and how they fit into the whole AI spectrum. I found that very helpful.

Thank you so much for your kind words David. I appreciate your support.

Most interesting, Thomas. Also loved to see those two excellent robots – C-3PO and R2-D2 – sweet!

Thank you so much Chris. Yes I think C-3PO and R2-D2 represent the good side of AI.

Thanks, Thomas. I look forward to your take on the actual and potential harm of using AI without adequate guardrails and legal recourse.

As linked on my homepage, I’ve curated nearly 100 articles outlining the many dangers, especially for children and the mentally vulnerable. I don’t pretend that I’m an AI expert. However, the number of legal actions underway, the AI industry’s outright theft of copyrighted material, and the reported suicides suggest there’s much to discuss regarding the actual and potential harm of AI.

The one thing no one can gloss over is the many AI industry executive choices. They have a track record of choosing profit over safety. That’s especially troubling when technology reaches the artificial superintelligence capability.

Regrettably, the marketing of ChatGPT, Claude, Gemini, and the other AI models seems to gloss over the dangers. Thanks for bringing to light what people need to know to protect their children, privacy, and livelihoods.

Also, on my Don’t Confuse AI with a Benign Tool page, if you see any inaccuracies in the wording, please let me know. As you’ve noted in your post, artificial intelligence has many complex facets. When breaking down that complexity into lay terms and using AI as a general term, I don’t want to water down the dangers through an inadvertent error. Thanks again, Thomas!

I did not see any inaccuracies. Thank you so much Grant. It is an excellent and very professional post.

You’re too kind, Thomas, and thank you for linking to the page.

Thank you so much Grant for your great article

BTW Grant, I updated both this post and my Artificial Intelligence is Not New post to include a link to your Don’t Confuse AI with a Benign Tool page.

Thank you, Thomas!

And I added a new post, “The Dark Side of Artificial Intelligence”, which is just a link to your Don’t Confuse AI with a Benign Tool page and a couple of images. Tell me if you would like me to change or add something.

Excellent post, Thomas, and I added a comment there.

Thank you so much Grant

Wow, I just read your post Don’t Confuse AI with a Benign Tool. That is very impressive and very well done, as well as eye opening and alarming. It is a topic I don’t/didn’t know much about, and you certainly did a very thorough job. Like we discussed, I will add the link to my two AI posts and add a new post using your link. I did not see a reblog option. Incidentally, I realized I was not subscribed to your site. I thought I was, but I fixed that.

I hope the page breaks down, but does not dumb down the essence of how artificial intelligence in its many forms affects lives around the globe. My point is to raise awareness, not police choices. The dirty little secret of choice is that it comes with positive and negative consequences.

Yes, that is a very good way to put it. Your article is certainly raising awareness, and our choices can have both positive and negative consequences, so we need to be aware of what those can be.

This is amazingly comprehensive, Thomas, and something to come back to. You have so much knowledge on the subject, which I know so little about. I’m not a fan of feeling invaded by prompts that constantly want me to update to AI. I don’t love that we are being driven to use it even if we don’t want to.

Thanks for sharing this informative post! ❤️

Thank you so much for your kind words Cindy. I do not know much about the dark side of AI, especially the dark side of LLMs. However, Grant in the comments above has written an excellent post about this topic that I am going to link to and make a separate post for. Stay tuned.

AI can sure be a help and a hindrance. I’ve seen the damage it’s caused people, and the help. What is so sad is that I hear people say it’s their favorite relationship. Yikes!!! 😱

I can understand that a dog can be someone’s favorite relationship, but AI, that is a little bit strange. Yes, Grant (see his article, link at the end of the post) did a great job describing the negative aspects of AI.

I agree, but I’ve heard it more than once.

Yes that is sad
